An Overview

Note: This feature incurs additional fees.

As your business grows, analyzing data from multiple sources becomes more critical. It helps you answer questions such as: What is your ROI by customer or by project? Are your customer support initiatives improving customer lifetime value? These are often questions that you cannot answer by analyzing data from a single source.

The Data Warehouse (DWH) is an enterprise-grade tool for the scheduled extraction of large volumes of data. It supports an initial export of millions of records, followed by incremental (delta) exports based on detected changes, and generates multiple sequential files for each exported entity.

Content:

- What are the benefits of using DWH Export?

- Supported Export Types

- Incremental Updates

- Secured Data

- Transfer of Files

- Data Warehouse Export & File Structure

- Error Detection

What are the benefits of using DWH Export?

- Share work management data with other external BI sources (e.g. Salesforce.com)

- Keep historical data snapshots and perform trend analysis

- Perform complex queries and in-depth analysis

- Use the reporting tool of your choice

Supported Export Types

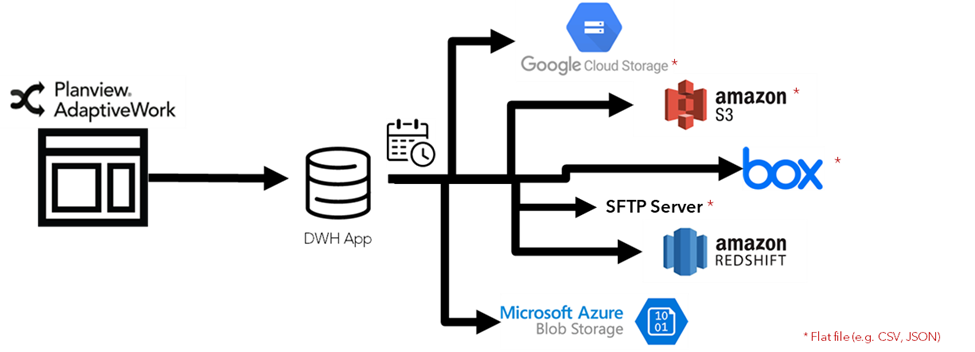

AdaptiveWork supports export of data to:

- Amazon Redshift

- Amazon S3 (as a flat file, e.g. CSV, JSON) - flat files must first be saved to Amazon S3, then downloaded locally if needed.

- Box (as a flat file, e.g. CSV, JSON) - a Box account is required before you can configure the export functionality.

- Azure Blob Storage

- SFTP Server (as a flat file, e.g. CSV, JSON)

- Google Cloud Storage (as a flat file, e.g. CSV, JSON)

Incremental Updates

For each entity type, AdaptiveWork stores the timestamp of the last export. The next time the export runs, it retrieves from AdaptiveWork all entities that were created, updated, or deleted since the previous run and exports only those delta records.
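The timestamp-based selection described above can be sketched as follows. This is a minimal illustration, not AdaptiveWork's implementation: the `modified_at` field, the `export_state` dictionary, and both function names are hypothetical, and a deletion is assumed to update `modified_at` like any other change.

```python
from datetime import datetime, timezone

# Hypothetical: used as the "last export" time before any export has run,
# so that the first run exports every record.
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def select_delta(records, last_export):
    """Return the records changed after the previous export."""
    return [r for r in records if r["modified_at"] > last_export]


def run_export(records, export_state, entity_type, now):
    """Export the delta for one entity type and store the new timestamp."""
    last_export = export_state.get(entity_type, EPOCH)
    delta = select_delta(records, last_export)
    export_state[entity_type] = now  # the timestamp is stored per entity type
    return delta
```

In this sketch the first run returns every record (the initial export), and each later run returns only what changed after the stored timestamp.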

Secured Data

AdaptiveWork data is protected during the export process by Amazon security policies.

Transfer of Files

Files are transferred from the Data Warehouse in Push mode.

Data Warehouse Export & File Structure

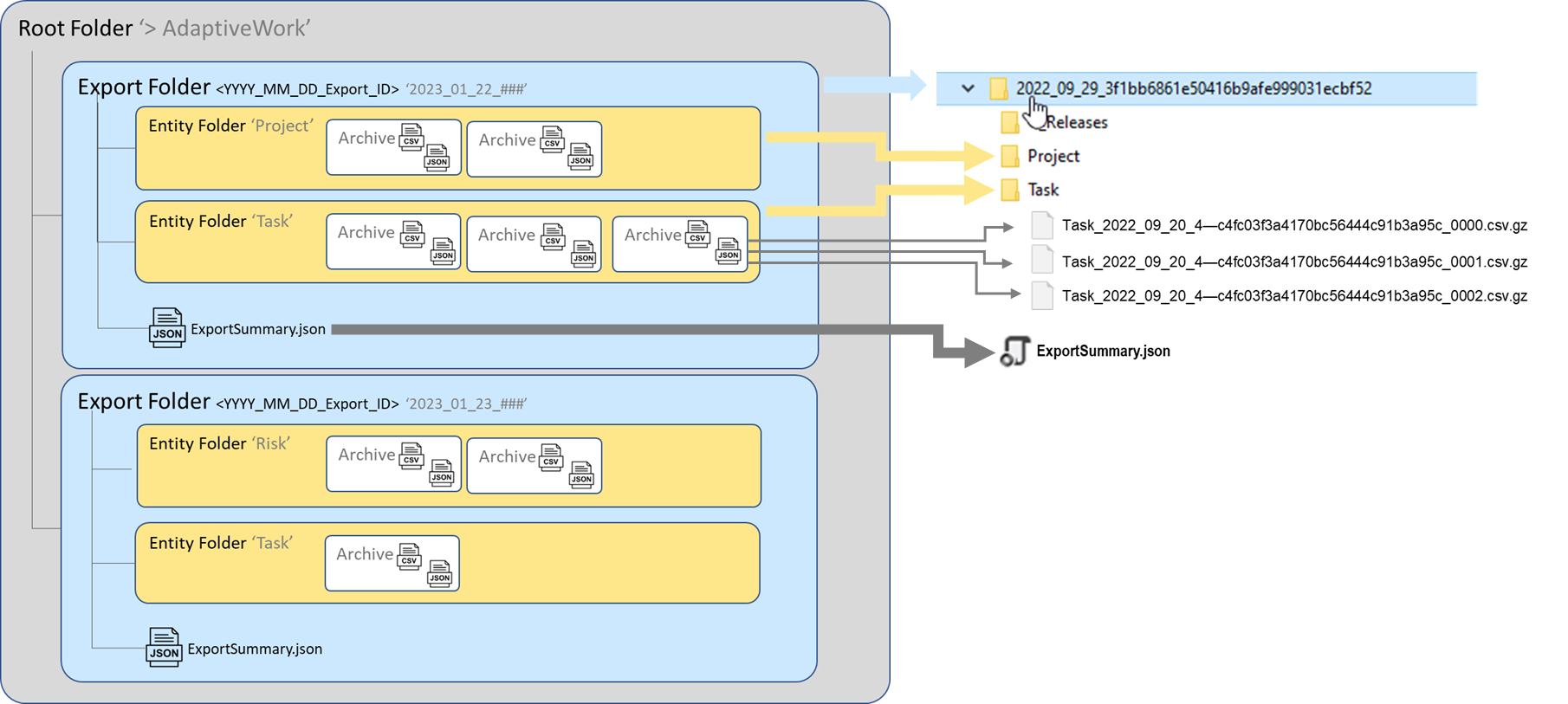

First, the Data Warehouse creates a root folder in which all folders and files are placed, e.g. AdaptiveWork (the Admin user can define the root folder name).

Each time an export runs, DWH creates:

- a new export folder (the folder name consists of the export date + unique export ID), placed within the root folder

- a separate entity folder for each entity selected for export (if there is at least one record to export), placed within the export folder

- archives with the data that was updated since the last export, placed within the entity folder

- each archive contains only a JSON or CSV file

- the file extension is json.gz or csv.gz
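On the receiving side, an archive can be decompressed and parsed with standard tooling. The sketch below assumes UTF-8 text, a header row in CSV files, and JSON content that the standard `json` module can parse in one call; none of these details are stated above, and `read_archive` is a hypothetical helper name.

```python
import csv
import gzip
import json
from pathlib import Path


def read_archive(path):
    """Decompress one .json.gz or .csv.gz archive and parse its content."""
    name = Path(path).name
    if name.endswith(".json.gz"):
        with gzip.open(path, "rt", encoding="utf-8") as fh:
            return json.load(fh)  # assumption: a single JSON document
    if name.endswith(".csv.gz"):
        with gzip.open(path, "rt", encoding="utf-8", newline="") as fh:
            return list(csv.DictReader(fh))  # assumption: first row is a header
    raise ValueError(f"unexpected archive extension: {name}")
```

If the JSON files turn out to hold one object per line rather than a single document, the JSON branch would need to parse line by line instead.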

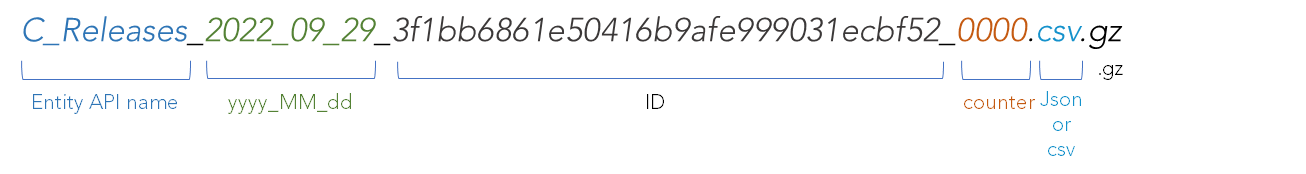

- the archive file name format is entity API name + yyyy_MM_dd + id + counter

- the export generates multiple sequential files as needed, each containing up to 1000 records (except for Box, where each file can contain up to 5000 records)

- first N archives contain new and/or updated records since the last export

- files and folders are created in chronological order

- last N archives contain deleted items, including the record identifier, the date of deletion, and the user who deleted the item. Most entities are stored in the Recycle Bin: when a user deletes an item, the data is not removed from the database but is logically marked as deleted, so a user can restore it. Restored data appears in the next export as an update.

- DWH doesn't create folders or files for entities with 0 records. If no records are exported for a particular entity, the email notification and the export summary file indicate a total of zero.

- DWH zips files, not folders
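The per-file record limit determines how many sequential files an export produces. A rough sketch of that split, using hypothetical names (`split_into_files`, the `target` values) and making no claim about how DWH implements it internally:

```python
DEFAULT_MAX_RECORDS = 1000  # per-file limit for most export targets
BOX_MAX_RECORDS = 5000      # per-file limit when exporting to Box


def split_into_files(records, target="s3"):
    """Split records into sequential chunks, one chunk per exported file."""
    limit = BOX_MAX_RECORDS if target == "box" else DEFAULT_MAX_RECORDS
    return [records[i:i + limit] for i in range(0, len(records), limit)]
```

Under these limits, 2,500 changed records would yield three files (1000, 1000, and 500 records) for most targets and a single file for Box. An empty list yields no files, which matches the rule that nothing is created for entities with 0 records.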

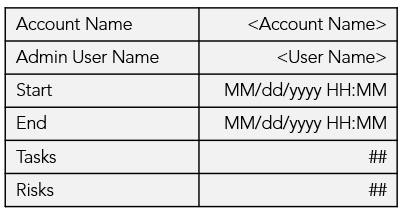

- export summary file with account details (Account Name, Admin User Name), system information (start and end date and time), and counters per entity (number of items received from the API)

Error Detection

If an error is detected during the Data Warehouse export process, processing stops and an email notification is sent to the user. In this case, no summary file with totals is created.